
During the training phase, the DRL agent is trained to follow the virtual guides in our simulated environments so as to obtain rewards. Virtual guidance is designed as a virtual lure for the DRL agent. We introduce “ virtual guidance ”, a simple but effective way to communicate the navigation path to our DRL agent (i.e., the AGV). Our experimental results show that the proposed methodology is able to guide our AGV to pass through crowded environments, and navigate it to the destination with high success rates both in indoor and outdoor environments.

The concept of virtual guidance is introduced in the following section. Virtual guidance enables our DRL agent to be guided by the planner module by setting up intermediate virtual waypoints along the AGV’s path. In order to guide the DRL agent to navigate to the destination while avoiding obstacles, we introduce a new concept called “virtual guidance” in our autonomous system. Navigation and obstacle avoidance, on the other hand, is performed by our planner module together with a DRL agent trained in virtual environments simulated by the Unity engine. In our system, visual perception and localization are performed by our perception and localization modules using the image frames captured by the RGB camera. We embrace techniques such as semantic segmentation with deep neural networks (DNNs), simultaneous localization and mapping (SLAM), path planning algorithms, as well as deep reinforcement learning (DRL) to implement the four functionalities mentioned above, respectively. Only a single RGB camera mounted on the AGV is used as input. In this project, we propose to overcome the above challenge by using several state-of-the-art techniques to exclude the need for any high-end sensors.

Although such devices provide solutions to the above requirements, they are mostly expensive and thus are unable to satisfy the need for mass production. In order to meet these requirements, conventional AGVs rely heavily on sensor inputs obtained from devices such as high-end cameras, LIDARs, GPSes, and other localization sensors. A visual-based autonomous navigation system typically requires four essential functionalities: visual perception, localization, navigation, and obstacle avoidance. Potential Challenges in Modern Autonomous Navigation SystemsĪlthough autonomous systems are promising and are expected to have numerous potential applications, developing a practical one is not a straightforward and easy task.

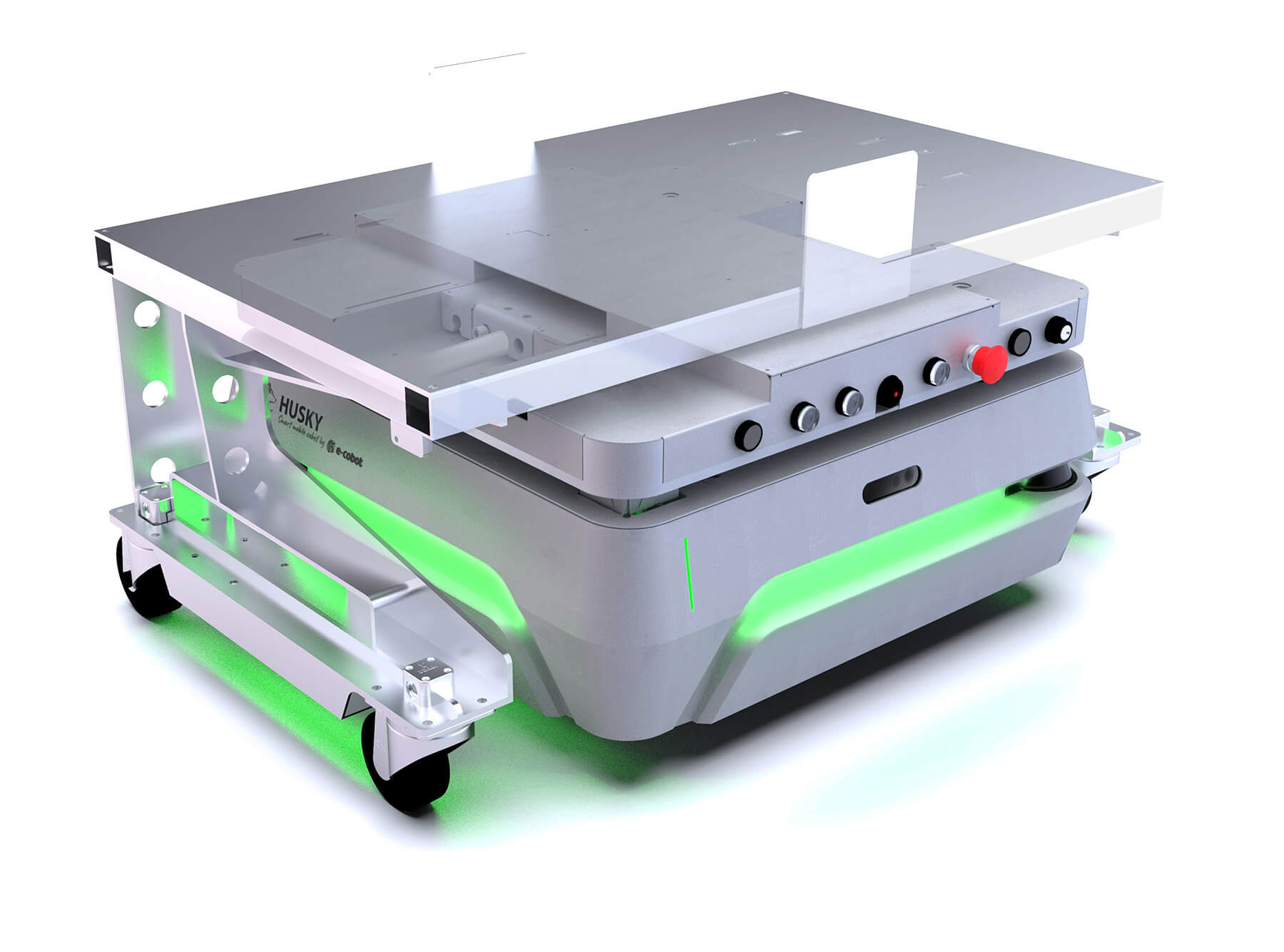

Autonomous navigation has been attracting attention in recent years, especially for maneuvering and controlling autonomous guided vehicles (AGVs) which include self-driving cars and delivery robots.
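To make the idea of virtual guidance more concrete, the sketch below shows one possible way a planner path could be turned into intermediate waypoints and how following them could be rewarded. It is a simplified illustration under stated assumptions, not the exact waypoint placement or reward used to train our agent, and it runs independently of the Unity simulation. We assume (hypothetically) that the planner outputs a 2-D polyline, that waypoints are resampled at a fixed `spacing`, and that the reward combines the agent's progress toward the current guide with a `reach_bonus` once it comes within `reach_radius`. The names `densify_path`, `VirtualGuidance`, `spacing`, `reach_radius`, and `reach_bonus` are all illustrative.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def densify_path(path: List[Point], spacing: float) -> List[Point]:
    """Resample a planner polyline into virtual waypoints about `spacing` apart.

    Hypothetical helper: assumes the planner outputs 2-D points in metres.
    """
    if spacing <= 0:
        raise ValueError("spacing must be positive")
    if len(path) < 2:
        return list(path)
    waypoints = [path[0]]
    carry = 0.0  # distance travelled since the last emitted waypoint
    for (x0, y0), (x1, y1) in zip(path, path[1:]):
        seg = math.hypot(x1 - x0, y1 - y0)
        d = spacing - carry  # distance along this segment to the next waypoint
        while d <= seg:
            t = d / seg
            waypoints.append((x0 + t * (x1 - x0), y0 + t * (y1 - y0)))
            d += spacing
        carry = seg - (d - spacing)
    # End exactly at the goal so that the last guide is the destination itself.
    if math.dist(waypoints[-1], path[-1]) > 1e-9:
        waypoints.append(path[-1])
    return waypoints


class VirtualGuidance:
    """Tracks which waypoint currently acts as the lure and computes a shaped reward.

    Hypothetical reward: progress toward the current guide plus a bonus on reaching it.
    """

    def __init__(self, waypoints: List[Point], reach_radius: float = 0.5,
                 reach_bonus: float = 1.0) -> None:
        self.waypoints = waypoints
        self.reach_radius = reach_radius
        self.reach_bonus = reach_bonus
        self.index = 0
        self.done = not waypoints

    def current_guide(self) -> Point:
        return self.waypoints[self.index]

    def step(self, prev_pos: Point, pos: Point) -> float:
        """Return the reward for the agent moving from prev_pos to pos."""
        if self.done:
            return 0.0
        guide = self.current_guide()
        # Dense term: how much closer the agent got to the current guide.
        reward = math.dist(prev_pos, guide) - math.dist(pos, guide)
        # Sparse term: reaching the guide earns a bonus and exposes the next one.
        if math.dist(pos, guide) <= self.reach_radius:
            reward += self.reach_bonus
            self.index += 1
            self.done = self.index >= len(self.waypoints)
        return reward


if __name__ == "__main__":
    waypoints = densify_path([(0.0, 0.0), (10.0, 0.0)], spacing=2.5)
    # Skip the start point, which the agent already occupies.
    guidance = VirtualGuidance(waypoints[1:])
    print(guidance.current_guide())  # (2.5, 0.0)
    print(guidance.step((0.0, 0.0), (2.4, 0.0)))
```

In this sketch the dense progress term gives the agent a learning signal at every step, while the bonus makes each guide behave like a lure that is consumed and replaced by the next one once reached. Any collision penalty needed for obstacle avoidance is deliberately omitted here.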
